
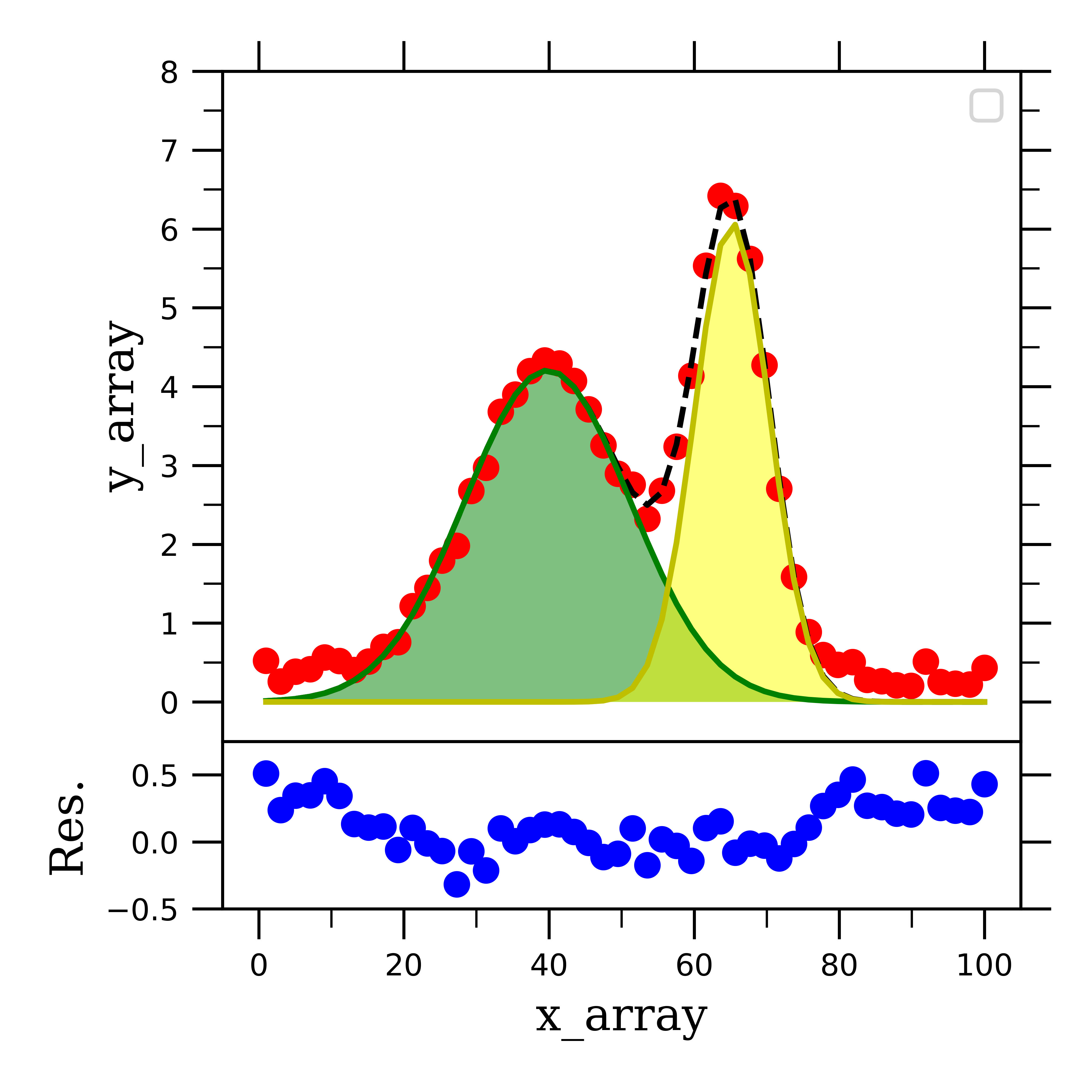

Residuals = y - linear_fit(x,params2,params2) Standarddevparams2 = np.sqrt(np.diag(covariance2)) I use it all the time for simpler fittings (linear, exponential, power etc). What if we want some statistics parameters ($R^2$)?Īlthough covariance matrix provides information about the variance of the fitted parameters, mostly everyone uses the standard deviation and coefficient of determination ($R^2$) value to get an estimate of ‘goodness’ of fit. Red line is vanilla fitting, Blue is the fit forced to pass thorough zero. Plt.plot(x, linear_fit(x,params2,params2),c='blue',ls='-',lw=2)įitted lines to the dummy linear data. curve_fir doesn't have an argument to make a parameter fixed, so I gave a very small value to c to force is close to 0.ArithmeticError The format for bounds is ((lower bound of m, lower bound of c),(upper bound of m, upper bound of c))ģ. Bounds argument provides the bounds for our fitting parameters.Ģ. Let’s force the intercept ( c ) to be zero, and slope (m) to work between 1 and 3.Īlso, let’s plot the two set of fitted parameters we obtained along with the data we generated. We can force the curve_fit to works inside the bounds defined by you for the different fitting parameters. What if we want the line to pass through origin (x=0,y=0)? In other words, what if we want the intercept to be zero? Also, due to this noise the intercept is non zero. This is due to the random noise introduced in the data. The slope of the line provided by curve_fit is lower/higher than the input slope. Params, covariance = curve_fit(f = linear_fit, xdata = x, ydata = y) xdata and ydata are the x and y data we generated above. The first argument f is the defined fitting function.ĥ. Covariance returns a matrix of covariance for our fitted parameters.Ĥ. In our case first entry in params will be the slope m and second entry would be the intercept.ģ. Params returns an array with the best for values of the different fitting parameters. 
Using the curve_fit function to fit the random linear dataĢ. # Calling the scipy's curve_fit function from optimize moduleġ. The output will be the slope (m) and intercept ( c ) for our data, along with any variance in these values and any fitting statistics ($R^2$ or $\chi^2$). To use curve_fit for the above data, we need to define a linear function which will be used to find fitting. Scipy has a powerful module for curve fitting which uses non linear least squares method to fit a function to our data. Y = 2.39645 * x + np.random.normal(0, 2, 100)ĭummy values for a linear fit. # random.normal method just adds some noise to data

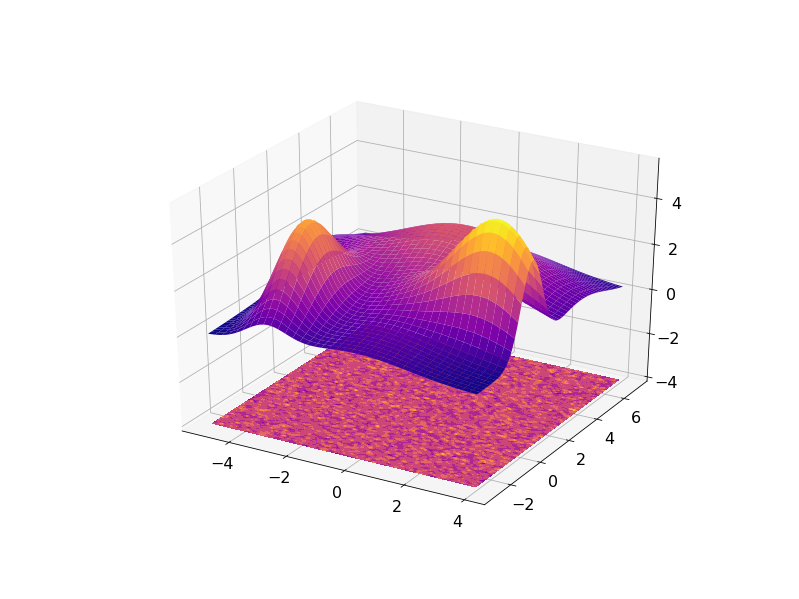

# Here the slope (m) is predefined to be 2.39645 # Importing numpy for creating data and matplotlib for plotting The y axis data is usually the measured value during the experiment/simulation and we are trying to find how the y axis quantity is dependent on the x axis quantity. Where, m is usually the slope of the line and c is the intercept when x = 0 and x (Time), y (Stress) is our data. Let’s generate some data whose fitting would be a linear line with equation: lmfit module (which is what I use most of the time) 1. This is where our best friend Python comes into picture.Ģ.

The Trendline option is quite robust for common set of function (linear, power, exponential etc) but it lacks in complexity and rigorosity often required in engineering applications. The easiest way to fit a function to a data would be to import that data in Excel and use its predefined Trendline function. Wouldn’t it be awesome if I could fit a line through this data so that I can predict/extrapolate the stress value at any given time? Well, one of the prime tasks in geotechincal engineering is trying to fit meaningful relationships to sometimes quite absurd and random data. Plot of stress vs time from my experiment.Īs soon as I see this data, I can tell that there is a non-linear (maybe exponential) relationship between time and stress. Let’s see the data from one of my experiments: The data from the experiments or simulations, exists as discrete numbers which I usually store as text or binary files.
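
The unbounded and bounded fits described in this post can be run end to end with a short sketch like the one below. It is only an illustration under stated assumptions: the x range (0 to 10), the random seed, and the half-width of the intercept interval (`1e-6`) are my own choices and not values taken from the post; the slope 2.39645, the noise scale 2, and the slope bounds 1 to 3 are the ones mentioned in the text.

```python
import numpy as np
from scipy.optimize import curve_fit


def linear_fit(x, m, c):
    """Straight line with slope m and intercept c."""
    return m * x + c


# Seeded generator so repeated runs give the same noise (seed is an assumption).
rng = np.random.default_rng(0)

# Dummy data: assumed x range; slope and noise scale as quoted in the text.
x = np.linspace(0, 10, 100)
y = 2.39645 * x + rng.normal(0, 2, 100)

# Unbounded fit: params holds [m, c], covariance is a 2x2 matrix.
params, covariance = curve_fit(f=linear_fit, xdata=x, ydata=y)

# Bounded fit: slope limited to [1, 3], intercept squeezed into a tiny
# interval around zero. The half-width 1e-6 is an assumed value.
bounds = ((1, -1e-6), (3, 1e-6))
params2, covariance2 = curve_fit(f=linear_fit, xdata=x, ydata=y, bounds=bounds)

print(f"free fit:    m = {params[0]:.4f}, c = {params[1]:.4f}")
print(f"bounded fit: m = {params2[0]:.4f}, c = {params2[1]:.4f}")
```

Because the intercept interval is so narrow, the bounded fit behaves like a line through the origin, while the free fit is allowed to pick up a small non-zero intercept from the noise.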

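For the goodness-of-fit numbers, one common way to get $R^2$ is $1 - SS_{res}/SS_{tot}$, computed from the residuals of the fit. The sketch below is a minimal version of that idea and not necessarily how the original calculation was written: the helper name `r_squared`, the seed, and the x range are hypothetical, and it uses the unbounded fit where the post works with the bounded one.

```python
import numpy as np
from scipy.optimize import curve_fit


def linear_fit(x, m, c):
    """Straight line with slope m and intercept c."""
    return m * x + c


def r_squared(y, y_fit):
    """Coefficient of determination: 1 - SS_res / SS_tot (hypothetical helper)."""
    residuals = y - y_fit
    ss_res = np.sum(residuals ** 2)          # residual sum of squares
    ss_tot = np.sum((y - np.mean(y)) ** 2)   # total sum of squares
    return 1 - ss_res / ss_tot


# Same kind of dummy data as in the post (seed and x range are assumptions).
rng = np.random.default_rng(0)
x = np.linspace(0, 10, 100)
y = 2.39645 * x + rng.normal(0, 2, 100)

params, covariance = curve_fit(f=linear_fit, xdata=x, ydata=y)

# Standard deviations of m and c: square roots of the covariance diagonal.
std_dev_params = np.sqrt(np.diag(covariance))

r2 = r_squared(y, linear_fit(x, *params))

print(f"m = {params[0]:.4f} +/- {std_dev_params[0]:.4f}")
print(f"c = {params[1]:.4f} +/- {std_dev_params[1]:.4f}")
print(f"R^2 = {r2:.4f}")
```

An $R^2$ close to 1 means the fitted line explains most of the variation in y; the standard deviations say how tightly the data pins down each parameter.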